Does your organisation embrace the “Silent Killer”?

I recently returned from my first visit to Muscat (Oman), spent a week at home, and then raced off to South Africa for a couple of weeks. It was fantastic to establish new safety relationships in Muscat. A breakfast meeting drew well over 400 participants, despite only 48 hours' notice of the event. I ran a specialised presentation of “Take a Second Look”. People could still be heard talking about the power of “human error” as they were getting into their cars.

During the visit to South Africa I had quite a few opportunities to sit down and just “chat” Safety with many people, from all sorts of backgrounds. This is the very best part of travelling – for me it is always about establishing relationships with people and having the opportunity to share.

Anyway, there I was, travelling to Cape Town and having a chat with my very good friend, Francois Smith (SAACOSH). Over the sounds of the engines, the flaps being adjusted, and the wheels coming down, we started talking about how our world of safety is so focused on measuring failure.

When people talk about “Safety”, and the performance of their organisations, what are the things they talk about?

You will hear people talk about their Lost Time Injury Frequency Rate (LTIFR), or they might even talk about their Medical Treatment Injury Frequency Rate (MTIFR), as if the latter were an improvement. Both these numbers are, quite often, corrupt abstractions.

So what do they really measure?

They measure the failure of your “system”. Do they do any more than that? Afraid not!

Read the author's article “LTIFR, MTIFR, TRIFR and other dodgy digits. It’s time to think again, and again, and again.”, published on LinkedIn.

What is the difference between an LTIFR of 4.2 and 1.8?

Do we even know?

Not really.

The best we can “assume” is that slightly fewer system failures are happening, resulting in slightly fewer injuries. Even that is a bit of a stretch. After all, by the time something becomes an LTIFR (or MTIFR) statistic, there have likely been so many system failures preceding it that it beggars belief. Indeed, there are many covert failures of your safety systems occurring regularly that you are not even aware of. You are not aware of them because their degree of “intrusion” into the metrics you mainly focus on (failure-based, reductionist numbers) is not great enough to trigger an alarm. Nonetheless, your “system” then accepts that failure as “normal”. The longer it continues without a visible event of some sort, the more “normal” it becomes, until it is part of “the way things are done around here”.

We call this the Normalisation of Deviance (Failure). If you thought that was bad enough, sorry, it gets worse! The new “normal” for your operations is one that functions in a failure state. It then becomes exponentially more difficult to redirect it towards a more proactive and healthy way of functioning.

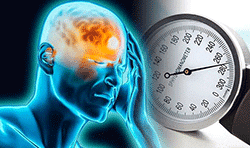

Another way to think about this is as follows. Imagine you have the odd headache. Nothing too troubling; you deal with it by taking the odd headache pill. Occasionally, when you stand up too quickly, you feel a little light-headed. Again, not very often at all. A few weeks ago you sneezed and had a bit of a nosebleed. You did the “magic touch” on the bridge of the nose and all was good. You have just experienced three (3) of the key symptoms of “The Silent Killer”: hypertension. For at least several months your blood pressure was too high (and at times too low). You were just unaware of it. For many people, the experiences I have briefly described above would not result in a trip to the doctor. If they were male, almost certainly not. You would not believe the number of seemingly healthy people who die of a heart attack or stroke. A post-mortem then finds evidence of long-term undiagnosed (and therefore untreated) hypertension. Clearly, all too late.

That should be very scary, from both an organisational and a personal perspective!

It stands to reason, though, that when hidden system failures become visible, we are on the road to system improvement (recovery). If only it were that easy!

What then happens is that the visible system failure (the injury) may trigger some sort of incident investigation. The “team” then locates a couple of factors and decides they were the “cause”. I am sure you have heard of the infamous Root Cause Analysis. Here’s the rub, my friends: the “team” is almost always wrong! Why? Because they nearly always identify surface factors, the things that are relatively easy to identify. They rarely dig down into the real underlying factors that have led the business (and the people within it) to operate, behave and accept things in particular ways.

“Most organizations operate in failure states and that just remains invisible because bad stuff is not happening. We might call that the ‘normalization of deviance’ and, make no mistake, it will kill.”

This situation only gets worse.

The organisation may then adopt or adapt procedures, counsel or train employees, and do all sorts of other things, all based upon a mirage. Yes, they see the palm trees and start heading toward the oasis, only to find it is not there when they arrive. I have seen so many organisations caught up in this wasteful journey, simply because they came to treat their measures of system failure as a measure of success.

Worth a thought?

Ricky, Atlanta

“I was fortunate to attend Transformational Safety’s Anatomies of Disaster Program. These were amongst the most powerful two days I have ever spent in a room. From the outset, David Broadbent set the scene by dedicating the program to the late Rick Rescorla, the man who is credited with saving over 2,700 lives on 9/11. Throughout the two days David would often respectfully reflect on and remember those who had died, or been injured, in the disasters we explored. He would say, and I will never forget, “…we must always remember those that lost their lives lift us up into the light of understanding”. I learnt so much: HRO, Resilience Engineering, Critical Incident Stress Management (CISM) and more. Those of us who were there are still talking about it. Thank you, David.”